An SEO audit sounds very technical to many bloggers. That’s partly true, but if you have decided to take your blog’s SEO to the next level, we are happy to help you on your way. There is room for improvement on every website. Do you know whether your website contains duplicate content? Are you unsure whether your website is mobile-friendly? Never heard of an XML sitemap? Read this blog post and all these SEO concepts will become a lot clearer.

Why are technical SEO aspects important?

Imagine you have a website with a lot of high-quality content, which can “convince” other quality websites to link to you. Ready? Of course not, because if the Google bots cannot properly crawl your pages, those pages will not be indexed either. They will, therefore, not be shown in the search results. That is why it is essential to become more aware of the technical aspects of SEO.

Which SEO aspects should you check?

Identify crawling issues with a crawl report.

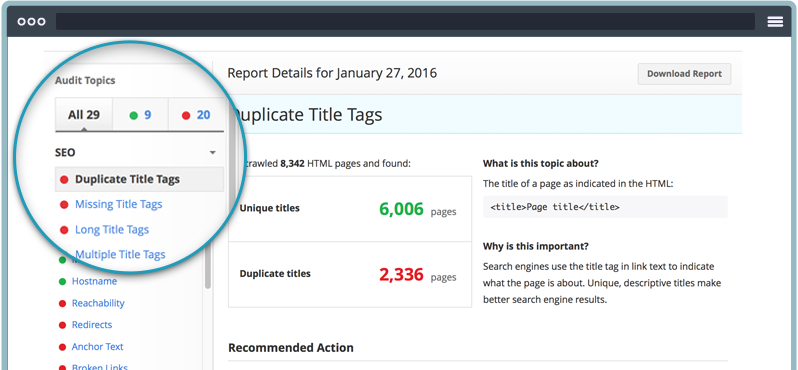

By running a report on your website, you can discover where you stand with regard to all the technical SEO aspects of your website or blog. It can tell you a lot about possible duplicate content, the loading speed, the page titles, the H1 and H2 tags, etc.

There are all kinds of handy tools for this, like Screaming Frog. Most are either totally free or free with limited report options. Unless you run a huge website with heavy traffic (and heavy profit, I assume?), most free SEO audit reports will cover all your needs.
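
To give you a feel for what such a report collects per page, here is a minimal Python sketch that pulls the title, the H1 and H2 tags and the meta description out of a piece of HTML. The `PageReport` class, the `report` helper and the sample page are made up for illustration. A real crawler like Screaming Frog does far more than this.

```python
from html.parser import HTMLParser


class PageReport(HTMLParser):
    """Collects the title, H1/H2 headings and meta description of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = {"h1": [], "h2": []}
        self.meta_description = None
        self._current = None  # tag whose text is currently being collected

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1", "h2"):
            self._current = tag
            if tag != "title":
                self.headings[tag].append("")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current in ("h1", "h2"):
            self.headings[self._current][-1] += data


def report(html):
    """Return a small dictionary with the on-page SEO basics."""
    parser = PageReport()
    parser.feed(html)
    return {
        "title": parser.title.strip(),
        "h1": [text.strip() for text in parser.headings["h1"]],
        "h2": [text.strip() for text in parser.headings["h2"]],
        "meta_description": parser.meta_description,
    }


# Made-up sample page, only for illustration.
sample = """<html><head><title>My blog</title>
<meta name="description" content="A blog about SEO."></head>
<body><h1>Welcome</h1><h2>Latest posts</h2><h2>About</h2></body></html>"""

page = report(sample)
print(page)
```

A page with no H1, several H1 tags or a missing meta description stands out immediately in a dictionary like this.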

Crawl & index

Search engines do three things:

- They crawl web pages, looking for content that is relevant to specific searches.

- If a page is relevant enough, they index it together with its content, its meta-information and its location.

- When someone does a specific search in Google, they display the most relevant search results based on the index.

In other words, “not crawled” means no indexing and therefore no chance to be shown in the search results. So find out which pages of your website are not indexed and fix them as soon as possible.

How can you know which pages were indexed by Google?

You can get a rough idea by typing site:yoururl.com in the search bar (without http:// or www.). However, this method is not 100% accurate, since the number of indexed pages can differ per Google domain: you may see more pages on Google.com, fewer on Google.ca and even fewer on Google.es.

If your website is not at the top of the search results, it is entirely possible that you are preventing your own website from being indexed (through some kind of SEO mistake).

If you want more accurate results, use Google Search Console. This tool not only tells you how often your website gets crawled, but also how many pages were actually indexed (so far).

Another good idea is to check Google Analytics (Reporting > Acquisition > Search Engine Optimization > Landing Pages), where you can see the unique landing pages that showed up in Google’s search results at least once, which means they have been crawled and indexed.

Check the HTTP status codes.

Serving all pages on your website over HTTPS is an absolute must. Come on, it’s nearly 2019! Without it, browsers warn your visitors that the site is not secure, and a botched switch (for example, missing redirects from the old http URLs) can leave robots and visitors with 4xx and 5xx HTTP status codes instead of your pages. Whether you like it or not, an SSL certificate has become the standard, and if you don’t have one already, you are only sabotaging your own efforts.

Security aside, HTTPS is a ranking signal for Google. So pages that are still served over http start at a disadvantage in the search engines.

Also, check Google Search Console for any other status code errors and resolve them as soon as possible.
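
If you want to spot-check a handful of URLs yourself, a rough Python sketch could look like this. The function names `classify_status` and `fetch_status` are my own, and the sketch assumes the server answers HEAD requests, which not every server does. Note that `urllib` follows redirects, so `fetch_status` reports the status of the final page.

```python
import urllib.error
import urllib.request


def classify_status(code):
    """Map an HTTP status code to a rough audit verdict."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"
    return "unknown"


def fetch_status(url, timeout=10):
    """Return the HTTP status code for a URL (after following redirects)."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions


# No network needed to see how the verdicts work:
for code in (200, 301, 404, 503):
    print(code, classify_status(code))
```

To check a real page, you would call something like `classify_status(fetch_status("https://yoururl.com/"))`, with your own URL in place of the placeholder.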

Robots.txt

This is a file that you can upload to your website to tell the Google bots which pages they are not allowed to crawl. Think, for example, of the checkout page of your eshop.

Or you can, for example, use this file to prevent specific search engines from crawling your website. Keep in mind that bots crawling your website can also be detrimental to the loading speed.

At the same time, use Google Search Console to check that this file does not inadvertently prevent robots from crawling the pages you want them to crawl and index.
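
You can also test your rules locally with Python’s built-in `urllib.robotparser` module. The robots.txt content below is an invented example (the “BadBot” crawler and the URLs are placeholders), so treat it as a sketch rather than a recommended configuration.

```python
from urllib.robotparser import RobotFileParser

# Invented example rules: keep every bot out of the checkout,
# and keep one specific (hypothetical) bot out of the whole site.
robots_txt = """\
User-agent: *
Disallow: /checkout/

User-agent: BadBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

checks = [
    ("Googlebot", "https://yoururl.com/blog/seo-audit"),
    ("Googlebot", "https://yoururl.com/checkout/cart"),
    ("BadBot", "https://yoururl.com/blog/seo-audit"),
]

for agent, url in checks:
    verdict = "allowed" if parser.can_fetch(agent, url) else "blocked"
    print(f"{agent:10} {url} -> {verdict}")
```

If a page you want indexed comes back as “blocked” here, the file is doing more than you intended.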

Check XML sitemap status

With the XML sitemap, you create a list of all your website’s pages for Google (and other bots), which makes it easier for them to find your pages.

Once your sitemap is ready, you can submit it to Google with the Sitemaps tool in Google Search Console.
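
Most CMS plugins generate the sitemap for you, but to show how simple the format is, here is a small Python sketch that builds one by hand. The `build_sitemap` helper and the URLs are placeholders of mine. Only the required `<loc>` element is written, and optional fields such as `<lastmod>` are left out.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # special characters are escaped
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap = build_sitemap([
    "https://yoururl.com/",
    "https://yoururl.com/blog/seo-audit",
])
print(sitemap)
```

Save the output as sitemap.xml in the root of your website before you submit it.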

Canonicalization

Suppose you have different pages on your website, each with a unique URL, that contain almost identical content. Then a canonical tag is called for. If you do not use one, the page authority of the pages in question suffers.

Without a canonical tag, the link value that your website receives via an inbound link to one page is spread across the pages with identical content. SEO-wise it is much more appealing for only one page to receive the full link value of the inbound link. Am I making myself clear to those who are reading this for the first time? Besides, pages with identical content and no canonical tag are considered duplicate content. That, in turn, hurts your visibility in the SERP.

To avoid all this, it is best to set a rel="canonical" tag. Just place it in the HTML <head> of each of these pages. This way, the search engines know which page is the most important of all the pages with the same content.
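
To check whether a page actually declares a canonical URL, you could use a sketch like the one below. The `CanonicalFinder` class and the sample page (a filtered shop page pointing to its main version) are invented for illustration.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> on a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and attrs.get("href"):
            self.canonicals.append(attrs["href"])


def find_canonicals(html):
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


# Made-up page: https://yoururl.com/shoes?color=red points to the main page.
sample = """<html><head>
<title>Red shoes</title>
<link rel="canonical" href="https://yoururl.com/shoes" />
</head><body>...</body></html>"""

print(find_canonicals(sample))
```

An empty list means the tag is missing. More than one entry means the page sends search engines conflicting signals.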

Loading speed

Another critical checkpoint in your SEO audit is the loading speed of your website. Slow loading has a serious negative influence on the user experience. It also leads to a higher bounce rate and a shorter time spent on the page, and those two happen to be signals that search engines are believed to use for ranking.

To know the loading speed of a URL, you can use Google PageSpeed Insights.

The loading speed has gained even more importance after the introduction of mobile-first indexing by Google. The general rule is that a page should load in less than 3 seconds, on desktop as well as on mobile.

Here are some tips:

Use PageSpeed Insights (mentioned above) or GTmetrix to find out which pages load slowly, what the cause is (for example, too many JavaScript files, large images, etc.) and, most importantly, how all these issues can be solved. Be sure to check whether your current hosting delivers the best possible loading speed for your website.

Make everything on your website as compact as possible. For example, use compressed images that are smaller than 50 KB wherever possible. Clear your website’s cache regularly. Look for broken links and remove them. Pay extra attention to clean, neat HTML.
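
If you have a local copy of your uploads folder, a few lines of Python can list the images that exceed the 50 KB guideline. The `oversized_images` helper and the list of file extensions are my own assumptions, so adjust them to your setup.

```python
from pathlib import Path

# Assumed set of image extensions; extend it if you use other formats.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif", ".webp"}


def oversized_images(folder, limit_kb=50):
    """Return (path, size in bytes) for every image larger than limit_kb,
    biggest first. Example call: oversized_images("wp-content/uploads")
    """
    limit_bytes = limit_kb * 1024
    results = []
    for path in Path(folder).rglob("*"):
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            size = path.stat().st_size
            if size > limit_bytes:
                results.append((path, size))
    return sorted(results, key=lambda item: item[1], reverse=True)
```

The biggest files come first, so you know where compression will pay off most.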

Mobile

Yes, mobiles are taking over the world. For three years now, Google has been rewarding mobile-friendly websites with a possibly higher ranking in the SERP. A website that loads quickly on mobile, has a clear structure and uses responsive design is therefore crucial.

Do not be tempted to make two separate websites. It is not only much more expensive but you also immediately have twice as much work with updates. Never make that mistake. Making your website template or WordPress theme responsive will save you tons of work.

Provide clear call-to-action buttons and avoid pop-ups, Flash, content that requires zooming, etc.

Check for duplicate keyword usage.

The search engines get confused when they find two pages on your website that target the same keyword. This can result in a lower CTR, lower page authority and a lower conversion rate.

To find out which pages on your website target the same keyword, check the Performance report in Google Search Console.
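
Once you have exported query and page combinations from that report, grouping them is easy. The sketch below assumes you have turned the export into simple (query, page) pairs. The sample rows and the `competing_pages` helper are invented for illustration.

```python
from collections import defaultdict


def competing_pages(rows):
    """Return the queries for which more than one page shows up."""
    pages_by_query = defaultdict(set)
    for query, page in rows:
        pages_by_query[query.strip().lower()].add(page)
    return {
        query: sorted(pages)
        for query, pages in pages_by_query.items()
        if len(pages) > 1
    }


# Made-up (query, page) pairs, as they might come out of an export.
rows = [
    ("seo audit", "/blog/seo-audit"),
    ("SEO audit", "/blog/seo-checklist"),
    ("xml sitemap", "/blog/xml-sitemap"),
]

conflicts = competing_pages(rows)
print(conflicts)
```

Every query in the result is a candidate for merging the pages or for refocusing one of them on a different keyword.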

Check the meta descriptions.

Look for duplicate meta descriptions and, even worse, missing meta descriptions. The first in particular is a familiar and fairly common SEO problem on huge websites with many pages. Use an SEO tool like Screaming Frog to collect the meta descriptions of your website. Then take sufficient time to write a unique meta description for at least your most important pages.
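
If your tool gives you a list of URLs with their meta descriptions, a short Python sketch like this one separates the missing from the duplicated ones. The `audit_meta_descriptions` helper and the sample data are placeholders, not the output of any particular tool.

```python
from collections import defaultdict


def audit_meta_descriptions(descriptions):
    """Split a {url: description} mapping into missing and duplicated entries."""
    missing = []
    urls_by_text = defaultdict(list)
    for url, text in descriptions.items():
        cleaned = (text or "").strip()
        if not cleaned:
            missing.append(url)
        else:
            urls_by_text[cleaned].append(url)
    duplicates = {
        text: urls for text, urls in urls_by_text.items() if len(urls) > 1
    }
    return missing, duplicates


# Made-up crawl result, only for illustration.
descriptions = {
    "/": "Tips for bloggers.",
    "/blog": "Tips for bloggers.",
    "/about": "",
    "/contact": None,
    "/blog/seo-audit": "How to run an SEO audit.",
}

missing, duplicates = audit_meta_descriptions(descriptions)
print("Missing:", missing)
print("Duplicates:", duplicates)
```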

Length of Meta description

While you are editing the pages with duplicate meta descriptions, you can check the length of these meta descriptions right away. A well-chosen length can positively affect the CTR from the SERP to your pages.

At the beginning of 2018, if you have been following the tech news, Google was toying with the length of these meta descriptions. Oh well, Google.

Check for broken links.

Last but definitely not least, fix the so-called broken links. These broken links not only waste a piece of your crawl budget, but they also create a negative user experience and can result in a lower ranking (think “bounce rate”).

I often use Dead Link Checker to discover the broken links on loreleiweb.com.
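
If you are curious how such a checker works under the hood, here is a simplified Python sketch. It collects the links on one page and asks a `get_status` function for the status code of each. That function is passed in by you, so the example below runs on made-up status codes instead of real requests. All names and URLs are placeholders.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

SKIPPED_PREFIXES = ("#", "mailto:", "tel:", "javascript:")


class LinkCollector(HTMLParser):
    """Collects the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href and not href.startswith(SKIPPED_PREFIXES):
                self.links.append(urljoin(self.base_url, href))


def broken_links(html, base_url, get_status):
    """Return (url, status) for links whose status is 400+ or unknown."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    broken = []
    for link in dict.fromkeys(collector.links):  # de-duplicate, keep order
        status = get_status(link)
        if status is None or status >= 400:
            broken.append((link, status))
    return broken


# Made-up page and status codes, so nothing is fetched over the network.
sample = (
    '<a href="/about">About</a> <a href="/old-post">Old post</a> '
    '<a href="#top">Top</a> <a href="https://example.com/">External</a> '
    '<a href="/old-post">Old post again</a>'
)
fake_statuses = {
    "https://yoururl.com/about": 200,
    "https://yoururl.com/old-post": 404,
    "https://example.com/": 200,
}

result = broken_links(sample, "https://yoururl.com/blog/", fake_statuses.get)
print(result)
```

For a real check you would replace `fake_statuses.get` with a function that requests each URL and returns its status code.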

Conclusion

So, as you can see, there are a lot of SEO aspects that you can include in a thorough SEO audit of your website. Just be patient and remember that SEO is a process, a never-ending journey, not an end goal.

Good luck and hopefully you haven’t been overloaded with information in this article.